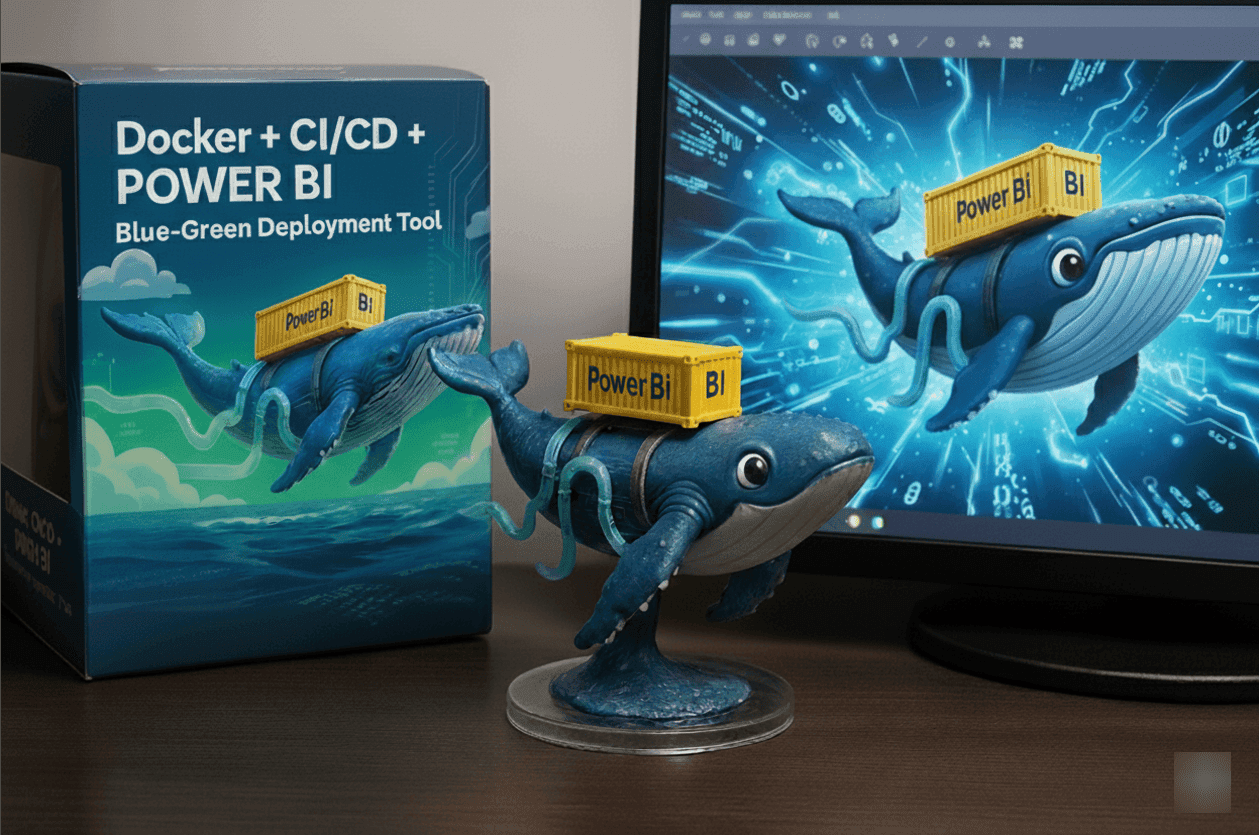

Containerized PowerBI Blue🔵🟢Green Deployment solution with Fabric CLI, Azure DevOps Pipelines + Docker

Understanding Blue-Green Deployments

Blue-green deployment is a technique that reduces downtime and risk by running two identical production environments called "Blue" and "Green." At any time, only one environment serves live traffic while the other remains idle. In traditional web applications and Azure Function Apps, this works by maintaining two complete infrastructure stacks, with load balancers switching traffic between them. (Azure Web App deployment slots are shown below.)

Why This Matters: Creating Parallel Production Environments

The Azure Web App/Function App Deployment Model Applied to Power BI

Azure App Services and Function Apps achieve zero-downtime deployments through deployment slots: two identical production environments are maintained, where one serves live traffic while the other stands ready for updates. When deployment is complete, traffic switches seamlessly between environments, providing instant rollback capability. Power BI currently lacks native deployment slots, but the operational requirement remains the same: create parallel production instances that allow safe updates without impacting live business operations.

Building Production Twins for Power BI

This solution implements the deployment slot concept within PowerBI workspaces by creating identical copies of production assets:

Active Production: Current reports and semantic models serving business users

Staging Production: Parallel versions with deployment prefixes, ready for validation and switchover

This approach mirrors Azure's blue-green deployment pattern, adapted for PowerBI's workspace-centric architecture.

The Core Business Value

Organizations achieve operational benefits similar to those that Azure deployment slots provide for web applications:

Continuous Availability: Production reports remain accessible throughout deployment cycles, eliminating service interruptions during updates.

Risk Mitigation: New versions undergo complete validation in production-identical environments before user exposure, reducing deployment-related incidents.

Rapid Recovery: Rollback operations execute immediately by redirecting users to previous versions, minimizing business impact when issues arise.

Deployment Confidence: Teams can execute updates during business hours with the assurance that failures won't disrupt operations.

What We Achieve

This implementation transforms Power BI deployments from a high-risk process into reliable, automated operations. Business users experience consistent service availability while IT teams gain the operational maturity and deployment confidence that modern DevOps practices provide for other enterprise applications. The parallel environment approach brings enterprise-grade deployment practices to Power BI, ensuring analytics infrastructure operates with the same reliability standards as mission-critical business applications. The high-level flow of Power BI artifact movement is shown below.

Our approach adapts blue-green deployment principles to Power BI's constraints using prefix-based naming conventions. Instead of separate infrastructure, we create parallel versions of reports and semantic models within the same or different workspaces, distinguished by prefixes. (If this is unclear, keep reading; the rest of the article walks through it.)

The strategy works as follows:

Blue Environment (Current Production), marked as (1): Reports and semantic models with standard production names (e.g., "Prod Model A", "Prod Report A").

Green Environment (New Version): Based on the production workspace artifacts (1), the solution creates reports and semantic models with environment-specific prefixes (e.g., Dev-Model A and Dev-Report A (2), Test-Model A and Test-Report A (3), Prod_New_Model A and Prod_New_Report A (4)) by copying the artifacts and binding them to their respective workspaces.

When deployment completes successfully, the green environment becomes the new production standard, and the blue environment serves as a rollback option.
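The prefix convention above can be sketched in a few lines of Python. This is only an illustration, not the article's deploy.py: the helper names `green_name` and `green_names` are hypothetical, and the base item names ("Model A", "Report A") are assumed to be the names passed to the pipeline before any prefix is applied.

```python
# Prefixes taken from the article; everything else is illustrative.
ENV_PREFIXES = {"Dev": "Dev-", "Test": "Test-", "Prod_New": "Prod_New_"}


def green_name(base_name: str, prefix: str) -> str:
    """Return the prefixed (green) name for a production (blue) item."""
    return f"{prefix}{base_name}"


def green_names(base_names: list[str]) -> dict[str, list[str]]:
    """Map each environment to the prefixed names it would receive."""
    return {
        env: [green_name(name, prefix) for name in base_names]
        for env, prefix in ENV_PREFIXES.items()
    }


if __name__ == "__main__":
    # Hypothetical base names standing in for the production items.
    for env, names in green_names(["Model A", "Report A"]).items():
        print(env, names)
```

Keeping the mapping in one place means the blue items never change names; only the copies carry a prefix, which is what makes the blue side usable as a rollback target.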

🏗️ Solution Architecture

The solution combines several technologies to create a robust, automated deployment pipeline:

Docker Containers: Provide consistent deployment environments and easy scalability across multiple target environments.

Azure DevOps Pipelines: Orchestrate the build and deployment process with proper credential management.

Fabric CLI: Handle workspace operations like copying reports and semantic models.

Power BI REST APIs: Manage report-to-dataset bindings and resolve object identifiers. (I initially tried to implement this with the Fabric CLI's set command (fab set), but had no success, so I went back to basics and used the REST APIs directly.)

Service Principal Authentication: Enable secure, automated access to PowerBI workspaces.
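To make the rebinding step concrete, here is a minimal sketch of how a report could be pointed at a copied semantic model through the Power BI REST API's "Rebind Report In Group" endpoint. It is not the code from deploy.py: the function name `build_rebind_request` is hypothetical, the IDs and token are placeholders, and the standard library is used instead of the requests package only to keep the sketch dependency-free. Acquiring the service principal token is out of scope here.

```python
import json
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_rebind_request(workspace_id: str, report_id: str,
                         dataset_id: str, access_token: str) -> urllib.request.Request:
    """Build (but do not send) the POST that rebinds a report to a dataset."""
    url = f"{API_ROOT}/groups/{workspace_id}/reports/{report_id}/Rebind"
    body = json.dumps({"datasetId": dataset_id}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


if __name__ == "__main__":
    # Placeholder identifiers; real values come from resolving names to IDs.
    req = build_rebind_request("<workspace-id>", "<report-id>", "<dataset-id>", "<token>")
    print(req.get_method(), req.full_url)
```

Sending the request would be a call to `urllib.request.urlopen(req)`. The sketch stops before that so it can be inspected without credentials.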

The image above shows how code changes flow from development through testing to production using containerization and automated deployment strategies.

The diagram illustrates a multi-stage deployment process that begins with main branch code changes being processed through Azure DevOps and pushed to Docker Hub as container images. The pipeline then supports both manual and automated deployment paths, where images are pulled and deployed across three distinct environments: Development, Testing, and Production.

The workflow shows how containers are dynamically created using docker run --rm commands for each environment, with built-in authentication, copying, and rebinding processes. Each container follows a complete lifecycle from creation to destruction, with the system maintaining separate workspaces for different environments. The architecture also incorporates PowerBI/Fabric production components and DevOps variable libraries for configuration management, demonstrating a robust continuous integration and deployment (CI/CD) strategy that ensures code quality and environment isolation throughout the software delivery lifecycle.

Two Main Deployment Phases

The architecture consists of two distinct phases that optimize both developer productivity and end-user simplicity:

Phase 1: Image Building (One-Time Setup, already cooked 🍳; I am explaining how I did it and what it does). When developers push changes to the main branch of the Prod Power BI DevOps repo, Azure DevOps triggers an automated build process that creates a Docker image containing all deployment scripts, Fabric CLI tools, and dependencies. The completed image is stored in Docker Hub, ready for use across all environments.

Phase 2: Deployment Execution (Ad Hoc Operations) 🎯. This is where end users interact with the system, using the image in two simple steps:

- Configure Parameters: Update Azure DevOps variable groups with workspace and report specifications & Service Principal credentials

- Execute Deployment: Trigger the second pipeline, which automatically pulls the pre-built Docker image and runs three parallel containers (Dev, Test, Prod_New) that handle authentication, copying, and rebinding processes
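The fan-out across the three environments can be sketched as follows. This is an assumption-laden illustration rather than the pipeline itself: the workspace names are placeholders, `docker_run_args` is a hypothetical helper, and the credentials are passed with Docker's `-e NAME` form (no value), which forwards the variable from the calling environment so the secret does not appear on the command line.

```python
import shlex

IMAGE = "nalakan/pbi_blue_green_dep_v1:latest"

# Prefixes come from the article; the target workspace names are placeholders.
TARGETS = [
    ("Dev Workspace", "Dev-"),
    ("Test Workspace", "Test-"),
    ("Prod Workspace", "Prod_New_"),
]


def docker_run_args(source_ws: str, target_ws: str, report: str,
                    model: str, prefix: str) -> list[str]:
    """Return the argument list for one short-lived deployment container."""
    return [
        "docker", "run", "--rm",
        # Forward service principal credentials from the host environment.
        "-e", "FABRIC_CLIENT_ID",
        "-e", "FABRIC_CLIENT_SECRET",
        "-e", "FABRIC_TENANT_ID",
        IMAGE,
        "--source-workspace", source_ws,
        "--target-workspace", target_ws,
        "--report-name", report,
        "--semantic-model-name", model,
        "--prefix", prefix,
    ]


if __name__ == "__main__":
    for target_ws, prefix in TARGETS:
        args = docker_run_args("Prod Workspace", target_ws, "Report A", "Model A", prefix)
        print(shlex.join(args))
```

Each printed command could be handed to `subprocess.run(args, check=True)`. Building the list first keeps quoting of names with spaces out of the caller's hands.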

Once the blue path establishes the Docker image through the build pipeline and the green path deploys identical containers bound to their respective workspace environments, developers can seamlessly begin their modification workflow. Starting from the development environment, teams can follow familiar PowerBI deployment pipelines to systematically promote artifacts from one stage to another.

This solution integrates with the existing Power BI deployment architecture, maintaining consistency with established practices while providing a containerized foundation that ensures environment consistency and deployment reliability.

The following image illustrates the workspace artifact progression in Production: image (1) captures the baseline state prior to container deployment, while images (2), (3), and (4) show the successive states following DevOps pipeline execution for the container releases.

Finally, we can configure the Power BI app as shown below by assigning custom report names and managing component visibility and swapping behavior as required, replicating the flexible configuration capabilities found in Function App deployments. The point is to maintain identical reports and models across environments, so that developers can work on reports and models without disrupting user activity or causing downtime.

Container Setup

This section touches on Docker image creation and registry publishing concepts. While I'm walking through the entire process for comprehensive understanding, end users only need to focus on the deployment execution—the image creation is a one-time setup that's already handled.

Here's the Dockerfile structure. The container uses the Python 3.11 slim image as its base runtime and adds the Microsoft Fabric CLI for workspace operations and the requests library for Power BI REST API interactions. The entrypoint script handles authentication and delegates to the main deployment logic.

FROM python:3.11-slim

RUN apt-get update && apt-get install -y --no-install-recommends \
    ca-certificates curl git build-essential \
    && rm -rf /var/lib/apt/lists/*

WORKDIR /app

# Install Fabric CLI and required Python packages
RUN pip install --no-cache-dir --upgrade ms-fabric-cli requests

# Copy deployment scripts
COPY deploy.py /app/deploy.py
COPY entrypoint.sh /entrypoint.sh
RUN chmod +x /entrypoint.sh

ENTRYPOINT ["/entrypoint.sh"]
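Because the entrypoint authenticates before anything else runs, a pre-flight check on the credentials is a natural first step. The sketch below is a hypothetical Python equivalent of such a check, not the contents of entrypoint.sh: the variable names match the ones the deployment pipeline passes with `-e`, while the function name `missing_credentials` is an assumption.

```python
import os

# Environment variables the deployment pipeline passes into the container.
REQUIRED_VARS = ("FABRIC_CLIENT_ID", "FABRIC_CLIENT_SECRET", "FABRIC_TENANT_ID")


def missing_credentials(env) -> list[str]:
    """Return the required variable names that are unset or empty in env."""
    return [name for name in REQUIRED_VARS if not env.get(name)]


if __name__ == "__main__":
    missing = missing_credentials(os.environ)
    if missing:
        print("Missing service principal variables:", ", ".join(missing))
    else:
        print("All service principal variables are set.")
```

Failing early with the names of the missing variables is easier to diagnose than an authentication error raised later inside the CLI.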

For comprehensive technical documentation, including complete scripts and configuration files, refer to the Resources section.

The ready-to-use Docker image is available on Docker Hub at https://hub.docker.com/repository/docker/nalakan/pbi_blue_green_dep_v1/general.

Build Pipeline (Image creation Process when there is a change to Prod source artifacts)

The build pipeline triggers automatically when changes to the Dockerfile, entrypoint.sh, deploy.py, or the build pipeline definition are pushed to the main branch:

trigger:
  branches:
    include:
      - main
  paths:
    include:
      - Dockerfile
      - entrypoint.sh
      - deploy.py
      - azure-pipelines-build-image.yml

pool:
  vmImage: 'ubuntu-latest'

variables:
  - name: imageRepository
    value: 'nalakan/pbi_blue_green_dep_v1'
  - name: imageTag
    value: '$(Build.BuildId)'

steps:
  - checkout: self

  # Fix line ending issues for shell scripts
  - script: |
      echo "Normalizing .sh files to LF line endings..."
      find $(Build.SourcesDirectory) -type f -name "*.sh" -exec sed -i 's/\r$//' {} +
    displayName: Normalize shell scripts

  - task: Docker@2
    displayName: Build and push image to Docker Hub
    inputs:
      command: buildAndPush
      repository: $(imageRepository)
      dockerfile: Dockerfile
      containerRegistry: dockerhub-connection
      tags: |
        $(imageTag)
        latest

Image Deployment Pipeline (Container Creation and Cross-Workspace Artifact Deployment)

This pipeline is triggered manually. It uses Azure DevOps variable groups for configuration management and service principal authentication, then creates containers and deploys artifacts across multiple workspaces, as illustrated in the referenced image.

trigger: none # Manual execution only

variables:
  - group: Fabric-ServicePrincipal # Service principal credentials
  - group: Fabric-DeploymentSettings # Workspace and item names
  - name: imageRepository
    value: 'nalakan/pbi_blue_green_dep_v1'
  - name: imageTag
    value: 'latest'

stages:
  - stage: Deploy
    jobs:
      - job: DeployToEnvs
        displayName: Deploy Dev / Test / Prod_New via Docker image
        steps:
          - script: docker pull $(imageRepository):$(imageTag)
            displayName: Pull image

          # Deploy to Development
          - script: |
              docker run --rm \
                -e FABRIC_CLIENT_ID=$(FabricClientId) \
                -e FABRIC_CLIENT_SECRET=$(FabricClientSecret) \
                -e FABRIC_TENANT_ID=$(FabricTenantId) \
                $(imageRepository):$(imageTag) \
                --source-workspace "$(ProdWorkspaceName)" \
                --target-workspace "$(DevWorkspaceName)" \
                --report-name "$(FabricReportName)" \
                --semantic-model-name "$(FabricModelName)" \
                --prefix Dev-
            displayName: Deploy to Dev
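On the receiving side, the container has to read the flags passed after the image name. A minimal sketch of how a script like deploy.py might parse them is shown here. The flag names are the ones used in the pipeline above, but the parsing code itself and the helper `target_names` are assumptions for illustration, not the author's implementation.

```python
import argparse


def parse_args(argv=None) -> argparse.Namespace:
    """Parse the deployment flags passed to the container."""
    parser = argparse.ArgumentParser(
        description="Copy a report and semantic model into a target workspace under a prefix."
    )
    parser.add_argument("--source-workspace", required=True)
    parser.add_argument("--target-workspace", required=True)
    parser.add_argument("--report-name", required=True)
    parser.add_argument("--semantic-model-name", required=True)
    parser.add_argument("--prefix", required=True)
    return parser.parse_args(argv)


def target_names(args: argparse.Namespace) -> tuple[str, str]:
    """Return the prefixed report and semantic model names for the target."""
    return (f"{args.prefix}{args.report_name}",
            f"{args.prefix}{args.semantic_model_name}")


if __name__ == "__main__":
    # Example values only; the pipeline supplies these from variable groups.
    demo = parse_args([
        "--source-workspace", "Prod Workspace",
        "--target-workspace", "Dev Workspace",
        "--report-name", "Report A",
        "--semantic-model-name", "Model A",
        "--prefix", "Dev-",
    ])
    print(target_names(demo))
```

Marking every flag as required makes a misconfigured variable group fail at startup instead of partway through a copy.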

That's it! The entire process (deploying the artifacts via containers) typically completes in under 10 minutes, deploying your parameterized reports across all three environments with zero manual intervention. (These timings are based on Azure DevOps free-tier usage, so they may not represent enterprise-level performance.) Each environment gets properly prefixed items (Dev-, Test-, Prod_New_) that are fully functional and independently testable.

The beauty of this approach is that once the initial setup is complete, deployments become a simple parameter change and button click. No complex commands, no manual copying, no risk of forgetting to rebind reports to datasets. Everything just works like a charm.

Conclusion

This PowerBI DevOps solution uses blue-green deployment patterns with Docker, Azure DevOps CI/CD, and Fabric CLI integration. It complements Microsoft's standard deployment pipelines by adding blue-green deployment functionality that isn't natively available in Power BI. The approach enables zero-downtime deployments and instant rollbacks across development, test, and production environments while maintaining data consistency.

Acknowledgments 🙏

Thanks to Leon and Dennes @ Onyx Data UK for sparking the idea of blue-green deployment for Power BI. The implementation methodology, tools, and techniques shown in this article are how I approached bringing their initial idea to life.

Resources

https://github.com/nalakan/PowerBIMeetsDocker

https://hub.docker.com/repository/docker/nalakan/pbi_blue_green_dep_v1/general

https://microsoft.github.io/fabric-cli/

Thanks for reading 📖! I hope this post added something valuable to your knowledge and sparked new ideas to explore further.